Hong-Ann Do

Professor Cassel

English 1201

23 Apr. 2017

A Realistic Look at Artificial Intelligence

What do most people think of when they hear the term “artificial intelligence”? If the movies are to be believed, artificial intelligence is when robots take over the world, as in Terminator or The Matrix. Though these are just movies, many people still believe that a harmful artificial intelligence (AI) singularity, a machine or program that possesses higher-than-human intelligence, is a strong possibility in the future. However, this belief is unfounded; it is spawned by media hype and a lack of understanding of the field of AI. Currently, AI research is nowhere near the creation of a general intelligence machine and is focused more on specialized tasks, such as image recognition. AI research offers many potential benefits, especially to the fields of software and medicine. Even though there are some practical problems to consider, AI will continue to progress, and its development will greatly contribute to advancing technology and the world.

Artificial intelligence is already improving many fields and will continue to offer even more benefits in the future. One example is Google Translate, a product long known for giving stilted, awkward translations that do not accurately portray how people speak in a given language. Recently, Google Brain, a branch of the company that specializes in machine learning, overhauled Translate into an AI-driven system, which has resulted in much smoother translations. Sundar Pichai, Google’s chief executive, recently showcased this at a conference in London. Demonstrating with a Spanish-to-English translation by the old system, Pichai read aloud a fairly awkward sentence: “One is not what is for what he writes, but for what he has read.” The new AI translation read, “You are not what you write, but what you read” (Lewis-Kraus). This stark contrast shows the vast improvement that AI has already made in language translation. In addition to language, Google is researching how AI can improve a variety of other tasks. Its DeepMind project uses an AI system that learns how to play video games and recently beat the world champion in a game of Go (Dubhashi 44). In 2012, the company debuted its driverless cars, which can navigate on their own through traffic (Kaplan 38). Many other big technology companies, such as Facebook, Amazon, IBM, and Microsoft, are also taking an interest in AI (Markoff). Most advances in AI have been in the field of machine learning. In this specialized field, AI has improved tasks such as tracking objects, diagnosing diseases, and trading stocks (Kaplan 37). A machine learning program made by Stanford was able to discern new indicators for breast cancer by analyzing tumor scans (Philbeck and Davis). Although these tasks may seem unrelated at first, all of them are based on finding patterns in data. AI has proven to be much better than humans at processing large amounts of data and can save people a lot of time by taking over mundane tasks that involve analyzing data.

Some of the imagined risks of AI are attributable to a lack of knowledge. The notion of an AI singularity that will become self-aware and intentionally do harm to humans is overblown by the media. The statements of several respected figures outside of the field, such as Elon Musk and Stephen Hawking, have also lent credence to this unfounded idea. AI researchers who work within the field realize that the possibility is virtually nonexistent. Instead, they are focused on the more practical tasks and challenges of AI (Kaplan 36). In addition, AI technology is so new that making such broad statements is premature. Unlike other threats such as climate change and gene editing, where more research has been done and there is a much better understanding, AI research is relatively green, and it is too early to make a case for the AI singularity (Dubhashi 43-44). AI, like many emerging technologies, goes through hype cycles. AI research has typically gone through periods of extreme interest called “bubbles” and periods of little interest called “winters.” During bubble periods, expectations tend to inflate the abilities of the new technologies and exaggerate the possibilities, leading to misinformation (Cristianini). The current bubble period and its heightened interest have amplified the media hype over an AI singularity.

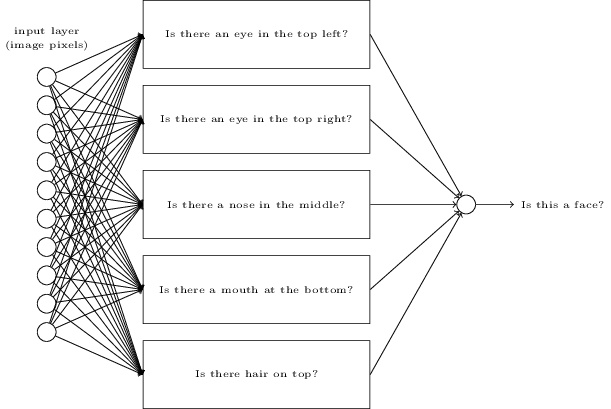

In order to better describe how AI can help the future, a definition of AI and an explanation of its progress is warranted. Intelligence is a loose term, and the definition of it in AI has been well debated. For example, if Google Maps was introduced to someone in the 1970, it would certainly seem like a form of AI that is well-verse in direction giving. Yet today, Google Maps just seems like an automated program, not anything particularly intelligent. This example illustrates that the definition of AI is fluid and dependent on the state of current research. In general, there are two ways to look at AI: artificial general intelligence and applications made possible by more intelligent programs. Artificial general intelligence (which is what the singularity would be considered) is a machine that that can think, learn, interpret information and take action from its findings. But, for reasons previously discussed, this is not yet a possibility. Most progress has been made in specific applications of AI through machine learning or neural networks (Lewis-Kraus). There are two approaches to AI: the old algorithm-driven method or the newer data-driven method. Take for example, the problem of image recognition, trying to teach a computer to recognize an image of a cat. The old way to do it would be to create an algorithm that specifically tells the program what characteristics to look for that define a cat, such as whiskers, two pointy ears, or four legs. However, this is not only time-consuming for the programmer to define all of these parameters but it also has limitations. If the program is thrown a picture of a dog, which also has four legs, it can incorrectly classify the image as a cat. The newer method takes a data-first approach using neural networks. Artificial neural networks are based on the human brain’s system of neurons.

Fig. 1. A diagram of a simple neural network. It processes the data through the layers of neurons and delivers an output (Nielsen).

The brain takes in information, such as visual images, and through experience and trial-and-error, begins to see patterns and interpret the data. Much like the brain, neural networks can take many images of cats and, through trial-and-error, begin to see patterns in the images and classify each image correctly. This allows for more accurate classification and saves time. Neural networks do not require the programmer to define what a cat is; the program learns by finding its own patterns in the images (Lewis-Kraus). Neural networks are the advancement that has made it possible for AI to improve medicine, software, and other fields.
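The contrast between the two approaches can be illustrated with a small Python sketch. It is only a toy under stated assumptions: the four yes-or-no features, the example animals, and the function names are made up for illustration and do not come from any of the sources, and the "network" is a single artificial neuron (a perceptron) rather than the layered network in Fig. 1. The first function is the hand-written rule; the second learns its own weights from labeled examples by trial-and-error.

```python
# Toy illustration only: features, animals, and names are hypothetical.
# Each animal is (whiskers, pointy_ears, four_legs, barks), with 1 = yes, 0 = no.
FEATURES = ("whiskers", "pointy_ears", "four_legs", "barks")


def is_cat_by_rules(animal):
    """Algorithm-driven approach: the programmer spells out what a cat is."""
    whiskers, pointy_ears, four_legs, _barks = animal
    # The programmer never thought about dogs, so barking is ignored.
    return bool(whiskers and pointy_ears and four_legs)


def train_perceptron(examples, epochs=20):
    """Data-driven approach: learn weights from labeled examples.

    Every wrong guess nudges the weights toward the right answer,
    which is the trial-and-error described above.
    """
    weights = [0] * len(FEATURES)
    bias = 0
    for _ in range(epochs):
        for features, label in examples:
            score = sum(w * x for w, x in zip(weights, features)) + bias
            guess = 1 if score > 0 else 0
            error = label - guess  # 0 when the guess was right
            weights = [w + error * x for w, x in zip(weights, features)]
            bias += error
    return weights, bias


def is_cat_learned(animal, weights, bias):
    """Classify an animal using the learned weights."""
    return sum(w * x for w, x in zip(weights, animal)) + bias > 0


# Labeled examples: 1 = cat, 0 = dog.
TRAINING = [
    ((1, 1, 1, 0), 1),
    ((1, 1, 1, 1), 0),
    ((1, 0, 1, 1), 0),
    ((0, 1, 1, 0), 1),
    ((0, 1, 1, 1), 0),
]

weights, bias = train_perceptron(TRAINING)
dog = (1, 1, 1, 1)
cat = (1, 1, 1, 0)

print(is_cat_by_rules(dog))                # True: the rule mistakes the dog for a cat
print(is_cat_learned(dog, weights, bias))  # False: the learned model does not
print(is_cat_learned(cat, weights, bias))  # True
```

Nobody told the learned classifier that barking matters; it discovered that pattern from the examples. Real image recognition works on raw pixels with many layers of neurons, but the idea of adjusting weights after each mistake is the same.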

However, there are some potential problems as AI progresses. One is within warfare. The military has been using AI to improve many of its weapons systems, such as drones that are meant to eliminate targets. As AI is used to fight wars, ethical issues arise. For example, should AI systems be allowed to make decisions that use lethal force on people? Humans, when fighting wars, try to minimize loss of life. A poorly designed machine may not think like humans and could kill just to “win” without taking the value of life into consideration. In addition, AI-enhanced warfare risks unbalancing the global distribution of power. Much like how the introduction of nuclear weapons led to the Cold War, AI weapons can shift the balance of which countries have the best warfare technology. This can lead to power plays and an AI arms race around the world (Philbeck and Davis). According to the World Economic Forum, “An arms race in autonomous weapons systems is very likely in the near future. The international community should tackle this issue with the utmost urgency and seriousness because, once the first fully autonomous weapons are deployed, it will be too late to go back.” Another concern is that AI will take jobs away from people and disrupt the job market. However, this is not necessarily true. History has shown that the advent of automation did not lead to widespread unemployment. Hundreds of years ago, more than 90% of jobs were on farms; now only 2% of jobs are on farms. Automation and machines replaced those farm jobs with jobs in factories and businesses. In addition, the general standard of living today is better because of automation. Similarly, AI is automation that is driven by more intelligent machines. It will replace some jobs, but it will also create different jobs for people and improve the overall standard of living. However, the transition between replacing jobs and creating jobs may cause difficulties for people during that time (Kaplan 37).

To mitigate the potential problems that AI poses, several solutions have been proposed. One is to promote the goal of Friendly AI (Yudkowsky 12). Friendly AI means designing AI so that it works well with humans and cannot be easily abused for malicious purposes. In addition to good design, AI should be regulated as it becomes more prevalent. As Kaplan asks, “Should a robot be permitted to stand in line for you, put money in your parking meter to extend your time, use a crowded sidewalk to make deliveries, commit you to a purchase, enter into a contract, vote on your behalf, or take up a seat on a bus?” (38). Regulation and design go hand in hand. Humans need to design machines so that they will comply with our social conventions. Though there is currently little regulation of AI capabilities, it will be much needed in the future. The Pentagon has begun to discuss the principle that humans should still control killing decisions rather than delegating that task to AI (Markoff). In addition to ethical regulations, regulations are needed on data collection. Many AI products, such as Siri, collect personal data in order to tailor a better experience for consumers. However, this raises privacy concerns, because AI can potentially violate privacy laws while collecting data. Thus, regulations on how, when, and where AI can collect data are needed (Cristianini). For future AI, regulations and good design are needed to prevent potential problems and make it easier for humans to work with AI.

Artificial intelligence is certainly a complicated field, but it presents a wide range of opportunities. The current improvements that AI has made in language, image processing, and medicine display only a small fraction of the potential that it has to offer. Neural networks will continue to provide improvements in data processing and advance the field of machine learning. AI does present some risks, particularly in warfare and job disruption, but these issues can be moderated through smart regulation and better design. As we begin to gain a better understanding of AI research, people and machines can work together to ensure a better future and focus on solving other practical challenges.

Works Cited

Cristianini, Nello. “Intelligence Reinvented.” New Scientist, Vol. 232, No. 3097. 29 Oct. 2016: 37. EBSCOhost. Web. 10 Apr. 2017.

Dubhashi, Devdatt, and Shalom Lappin. “AI Dangers: Imagined and Real.” Communications of the ACM, Vol. 60, No. 2. Feb. 2017: 43-45. EBSCOhost. Web. 7 Apr. 2017.

Kaplan, Jerry. “Artificial Intelligence: Think Again.” Communications of the ACM, Vol. 60, No. 1. Jan. 2017: 36-38. EBSCOhost. Web. 6 Apr. 2017.

Lewis-Kraus, Gideon. “The Great A.I. Awakening.” The New York Times, 14 Dec. 2016. Web. 9 Apr. 2017. <https://www.nytimes.com/2016/12/14/magazine/the-great-ai-awakening.html?_r=1>.

Markoff, John. “Devising Real Ethics for Artificial Intelligence.” The New York Times, Vol. 165, No. 57343. 2 Sept. 2016: B1. EBSCOhost. Web. 9 Apr. 2017.

Nielsen, Michael. Photograph. Determination Press, 2015. Web. 1 May 2017. <http://neuralnetworksanddeeplearning.com/chap1.html>.

Philbeck, Thomas, and Nicholas Davis. “3.2 Assessing the Risk of Artificial Intelligence.” Global Risks Report 2017, World Economic Forum, 2016. Web. 9 Apr. 2017. <http://reports.weforum.org/global-risks-2017/part-3-emerging-technologies/3-2-assessing-the-risk-of-artificial-intelligence/>.

Yudkowsky, Eliezer. “Artificial Intelligence as a Positive and Negative Factor in Global Risk.” Global Catastrophic Risks, Oxford University Press, 2008. PDF.
